Motion Clouds: Model-based stimulus synthesis of natural-like random textures for the study of motion perception

Abstract

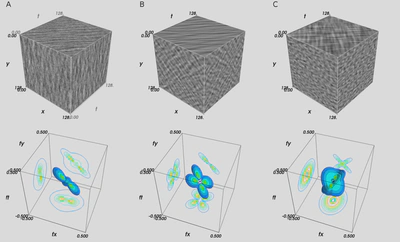

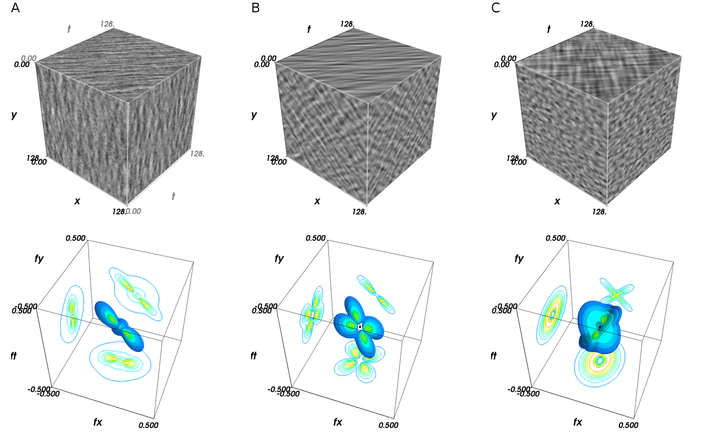

Choosing an appropriate set of stimuli is essential to characterize the response of a sensory system to a particular functional dimension, such as the eye movement following the motion of a visual scene. Here, we describe a framework to generate random texture movies with controlled information content, i.e., Motion Clouds. These stimuli are defined using a generative model that is based on controlled experimental parametrization. We show that Motion Clouds correspond to dense mixing of localized moving gratings with random positions. Their global envelope is similar to natural-like stimulation with an approximate full-field translation corresponding to a retinal slip. We describe the construction of these stimuli mathematically and propose an open-source Python-based implementation. Examples of the use of this framework are shown. We also propose extensions to other modalities such as color vision, touch, and audition.
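As a rough illustration of the idea, a random texture movie can be sketched by shaping a Fourier-domain envelope and assigning each frequency component an independent random phase. The NumPy sketch below is not the API of the Motion Clouds library. The function name `random_phase_texture`, its parameters (`f_0`, `b_f`, `b_v`, and so on), and the Gaussian shape of the envelope are assumptions made for this example.

```python
import numpy as np

def random_phase_texture(n_x=32, n_y=32, n_frame=16, v_x=1.0, v_y=0.0,
                         f_0=0.125, b_f=0.05, b_v=0.1, seed=0):
    """Synthesize a small random-phase movie (illustrative sketch only).

    All names and envelope shapes here are hypothetical; they are not
    taken from the Motion Clouds source code.
    """
    rng = np.random.default_rng(seed)
    # Frequency grids in cycles per pixel (fx, fy) and cycles per frame (ft).
    fx, fy, ft = np.meshgrid(np.fft.fftfreq(n_x), np.fft.fftfreq(n_y),
                             np.fft.fftfreq(n_frame), indexing="ij")
    f_r = np.sqrt(fx**2 + fy**2)
    # Assumed Gaussian band around the mean spatial frequency f_0.
    radial = np.exp(-0.5 * ((f_r - f_0) / b_f) ** 2)
    # A rigid translation at velocity (v_x, v_y) has its energy on the plane
    # ft + v_x*fx + v_y*fy = 0; b_v sets how thick the band around it is.
    speed = np.exp(-0.5 * ((ft + v_x * fx + v_y * fy) / b_v) ** 2)
    envelope = radial * speed
    envelope[0, 0, 0] = 0.0  # drop the DC term so the movie has zero mean
    # Independent uniform phases give the dense mix of drifting gratings.
    phase = rng.uniform(0.0, 2.0 * np.pi, size=envelope.shape)
    movie = np.fft.ifftn(envelope * np.exp(1j * phase)).real
    return movie / np.abs(movie).max()  # scale contrast to [-1, 1]

movie = random_phase_texture()
print(movie.shape)
```

Narrowing `b_v` in this sketch concentrates energy closer to the speed plane, so the texture looks more like a rigid full-field translation; widening it makes the motion noisier.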

- Website

- Source code using Python.

- 37 citations on Google Scholar (last updated 22/10/2021)

- Supplementary information

- Follow-up papers:
  - (2018). Bayesian Modeling of Motion Perception using Dynamical Stochastic Textures. Neural Computation.
  - (2015). Biologically Inspired Dynamic Textures for Probing Motion Perception. Advances in Neural Information Processing Systems.

- This library was notably used in the following paper: (2012). More is not always better: dissociation between perception and action explained by adaptive gain control. Nature Neuroscience.