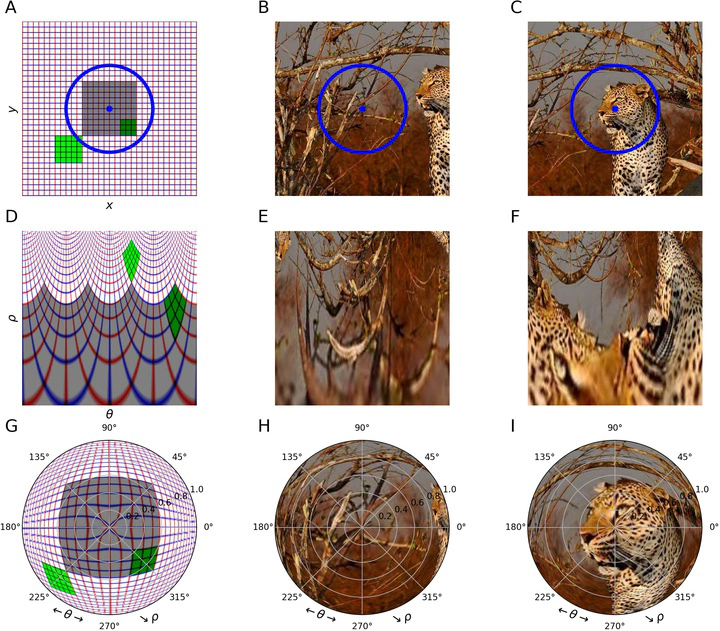

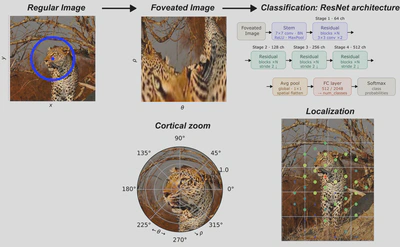

From falcons spotting prey to humans recognizing faces, the ability to rapidly process visual information depends on a foveated retinal organization that provides high-acuity central vision while preserving low-resolution peripheral vision. This organization is conserved along early visual pathways, yet it remains under-explored in machine learning. Here, we examine the impact of embedding a foveated retinotopic transformation as a preprocessing layer in convolutional neural networks (CNNs) for image classification. By applying a log-polar mapping to off-the-shelf models and retraining them, we achieve comparable accuracy while improving robustness to scale and rotation. We show that this architecture is highly sensitive to shifts in the fixation point and that this sensitivity provides an effective proxy for defining saliency maps that facilitate object localization. Our results demonstrate that foveated retinotopy encodes prior geometric knowledge, providing a solution for visual search and a meaningful trade-off between classification robustness and localization. These findings provide a proof of concept for connecting principles of biological vision with artificial networks, suggesting new, robust, and efficient approaches for computer vision systems.
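To make the log-polar preprocessing step concrete, the following is a minimal sketch of a foveated resampling in NumPy. It is not the implementation used in this work: the function names `log_polar_grid` and `log_polar_transform`, the parameters `n_rho`, `n_theta`, and `r_min`, and the use of nearest-neighbour sampling are all assumptions chosen for illustration.

```python
import numpy as np


def log_polar_grid(height, width, n_rho=64, n_theta=64, r_min=1.0, center=None):
    """Return (rows, cols) sampling coordinates of a log-polar grid.

    All parameter names are hypothetical. `center` is the fixation point
    in (row, col) pixel coordinates and defaults to the image centre.
    """
    if center is None:
        center = ((height - 1) / 2.0, (width - 1) / 2.0)
    cy, cx = center
    r_max = min(height, width) / 2.0
    # Radii are spaced geometrically: dense near the fixation point
    # (fovea), sparse towards the periphery.
    rho = np.exp(np.linspace(np.log(r_min), np.log(r_max), n_rho))
    theta = np.linspace(0.0, 2.0 * np.pi, n_theta, endpoint=False)
    rr, tt = np.meshgrid(rho, theta, indexing="ij")
    rows = cy + rr * np.sin(tt)
    cols = cx + rr * np.cos(tt)
    return rows, cols


def log_polar_transform(image, n_rho=64, n_theta=64, r_min=1.0, center=None):
    """Resample an (H, W) or (H, W, C) image onto a log-polar grid.

    Assumption: nearest-neighbour sampling, with samples that fall
    outside the image clamped to the nearest border pixel.
    """
    h, w = image.shape[:2]
    rows, cols = log_polar_grid(h, w, n_rho, n_theta, r_min, center)
    r_idx = np.clip(np.rint(rows).astype(int), 0, h - 1)
    c_idx = np.clip(np.rint(cols).astype(int), 0, w - 1)
    # Output axes: (log-radius, angle[, channel]).
    return image[r_idx, c_idx]


if __name__ == "__main__":
    img = np.random.default_rng(0).random((224, 224, 3))
    foveated = log_polar_transform(img)
    print(foveated.shape)
```

In such a representation, a rotation of the input about the fixation point corresponds approximately to a circular shift along the angular axis, and a rescaling corresponds approximately to a shift along the log-radius axis, which is one way to read the robustness to scale and rotation described above. Passing a different `center` moves the fixation point and changes the whole output, consistent with the sensitivity to fixation shifts.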