Open Science

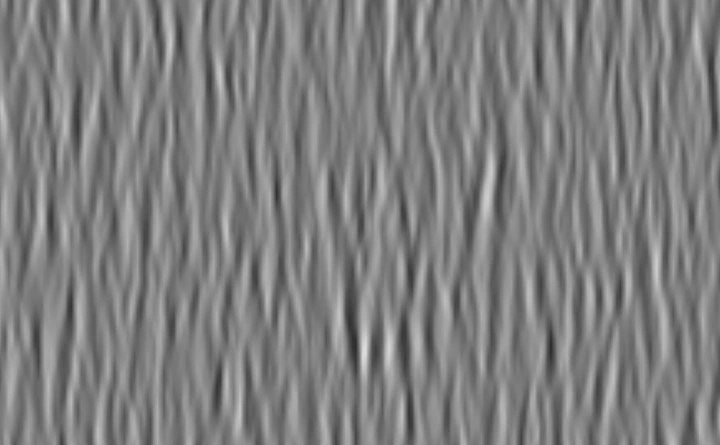

Snapshot of a Motion Cloud

To enable the dissemination of the knowledge produced in our lab, we share all source code under open source licences. This includes code to reproduce results obtained in papers (e.g. (Perrinet, Adams and Friston, 2015), (Perrinet and Bednar, 2015), (Khoei et al, 2017), (Perrinet, 2019), (Pasturel et al, 2020), (Dauce et al, 2020)), courses and slides (e.g. 2019-04-03: vision and modelization, 2019-04-18_JNLF, …), and the development of the following libraries on GitHub.

HD natural images database for sparse coding

A dataset of natural images, acquired with a Canon EOS6D and Canon EOS650. It has been curated to facilitate research, currently on sparse coding, but can also serve future projects. Maintainer: Hugo Ladret.

- Get the dataset

- See the preprint publication @ (2023). Convolutional Sparse Coding is improved by heterogeneous uncertainty modeling. ICLR 2023 SNN Workshop.

Bayesian Change Point

A Python implementation of Adams & MacKay (2007), “Bayesian Online Changepoint Detection”, for binary inputs.

- Source code

- See the final publication @
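
As an illustration of the underlying idea, here is a minimal sketch of the run-length recursion from Adams & MacKay (2007) for binary observations. It assumes a Beta-Bernoulli model and a constant hazard rate; the function name `bocd_bernoulli` and its parameters (`hazard`, `a0`, `b0`) are hypothetical and do not reflect the API of the library above.

```python
import numpy as np

def bocd_bernoulli(x, hazard=0.02, a0=1.0, b0=1.0):
    """Run-length posterior for a binary sequence (illustrative sketch).

    R[t, r] is the probability that the current run contains r
    observations after seeing the first t data points.
    """
    T = len(x)
    R = np.zeros((T + 1, T + 1))
    R[0, 0] = 1.0
    # Beta parameters, one pair per run-length hypothesis
    a = np.array([a0])
    b = np.array([b0])
    for t, xt in enumerate(x):
        # Predictive probability of xt under each run-length hypothesis
        p1 = a / (a + b)
        pred = p1 if xt == 1 else 1.0 - p1
        joint = R[t, :t + 1] * pred
        # Either the run grows by one, or a change point resets it to zero
        R[t + 1, 1:t + 2] = joint * (1.0 - hazard)
        R[t + 1, 0] = np.sum(joint * hazard)
        R[t + 1] /= R[t + 1].sum()
        # Update sufficient statistics; run length 0 restarts from the prior
        a = np.concatenate(([a0], a + xt))
        b = np.concatenate(([b0], b + (1 - xt)))
    return R

# Toy input (assumed for illustration): the bias switches halfway through
x = [0] * 50 + [1] * 50
R = bocd_bernoulli(x)
print("most probable run length at the end:", R[-1].argmax())
```

Each row of `R` is a normalized distribution over run lengths, so a sudden shift of its mass towards short run lengths signals a likely change point.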

ANEMO: Quantitative tools for the ANalysis of Eye MOvements

This implementation proposes a set of robust fitting methods for the extraction of eye movements parameters.

- Source code

- See a poster: Pasturel, Montagnini and Perrinet (2018)

- This library was used in the following publication @

LeCheapEyeTracker

Work in progress: an eye tracker based on webcams.

Biologically inspired computer vision (Python)

SLIP: a Simple Library for Image Processing

This library collects different Image Processing tools for use with the LogGabor and SparseEdges libraries.

LogGabor: a Simple Library for Image Processing

This library defines the set of LogGabor kernels. These are generic edge-like filters at different scales, phases and orientations. The library provides a simple method to construct a multi-scale linear transform.

- Web-site

- Source code

- This library is detailed in the following publication

- LogGabor filters are used in numerous computer vision applications, and this publication has reached 177 citations on Google Scholar (last updated 22/10/2021).
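
To make the notion of a log-Gabor kernel concrete, the sketch below builds one filter in the Fourier domain with NumPy, using the common textbook form (a Gaussian on the log of the radial frequency multiplied by a Gaussian on orientation). This is an assumption made for illustration: the function `log_gabor` and its parameters (`f0`, `theta0`, `bw_f`, `bw_theta`) are hypothetical, and the library's own parametrization may differ.

```python
import numpy as np

def log_gabor(size=64, f0=0.1, theta0=0.0, bw_f=0.55, bw_theta=np.pi / 8):
    """Return the Fourier envelope and the spatial kernel of one log-Gabor filter."""
    # Frequency grid in cycles per pixel, in unshifted FFT layout
    freqs = np.fft.fftfreq(size)
    FX, FY = np.meshgrid(freqs, freqs)
    f = np.hypot(FX, FY)
    f[0, 0] = 1.0  # placeholder to avoid log(0); the DC term is zeroed below
    # Radial part: Gaussian on a logarithmic frequency axis, centred on f0
    radial = np.exp(-np.log(f / f0) ** 2 / (2 * np.log(bw_f) ** 2))
    radial[0, 0] = 0.0
    # Angular part: Gaussian on the wrapped angular distance to theta0
    theta = np.arctan2(FY, FX)
    dtheta = np.arctan2(np.sin(theta - theta0), np.cos(theta - theta0))
    angular = np.exp(-dtheta ** 2 / (2 * bw_theta ** 2))
    envelope = radial * angular
    # Complex spatial kernel: real part is even-symmetric, imaginary part is odd
    kernel = np.fft.fftshift(np.fft.ifft2(envelope))
    return envelope, kernel

# Filtering a toy image (random noise, assumed for illustration)
image = np.random.default_rng(0).standard_normal((64, 64))
envelope, kernel = log_gabor()
response = np.fft.ifft2(np.fft.fft2(image) * envelope)
```

Varying `f0` and `theta0` over a small set of values yields a bank of filters at several scales and orientations, while the real and imaginary parts of `response` correspond to two phases in quadrature.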

SparseEdges: sparse coding of natural images

Our goal here is to build practical algorithms of sparse coding for computer vision.

This class exploits the SLIP and LogGabor libraries to provide a sparse representation of edges in images.

- Web-site

- Source code

- This algorithm was presented in the following paper, which is available as a reprint

- It was notably used in the following paper
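
For readers unfamiliar with sparse coding, the following sketch shows a generic greedy scheme (matching pursuit) that approximates a signal with a few atoms from a dictionary. It is given only as an illustration under stated assumptions: the function `matching_pursuit` is hypothetical, the dictionary is assumed to hold unit-norm atoms as rows, and nothing here is taken from the library's actual code.

```python
import numpy as np

def matching_pursuit(signal, dictionary, n_atoms=5):
    """Greedy sparse code of `signal` over unit-norm atoms (rows of `dictionary`)."""
    residual = np.asarray(signal, dtype=float).copy()
    coeffs = np.zeros(dictionary.shape[0])
    for _ in range(n_atoms):
        # Correlate every atom with what remains to be explained
        corr = dictionary @ residual
        best = np.argmax(np.abs(corr))
        # Keep the best-matching atom and subtract its contribution
        coeffs[best] += corr[best]
        residual -= corr[best] * dictionary[best]
    return coeffs, residual

# Toy example with a random unit-norm dictionary (assumed for illustration)
rng = np.random.default_rng(0)
D = rng.standard_normal((32, 16))
D /= np.linalg.norm(D, axis=1, keepdims=True)
signal = 2.0 * D[3] - 1.5 * D[20]
coeffs, residual = matching_pursuit(signal, D, n_atoms=4)
print("non-zero coefficients:", np.count_nonzero(coeffs))
```

With atoms that are edge-like filters, the few non-zero coefficients would describe an image as a small set of edges.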

Sparse Hebbian Learning: unsupervised learning of natural images

This is a collection of Python scripts to test learning strategies for efficiently coding natural image patches. It is restricted here to the framework of the SparseNet algorithm from Bruno Olshausen (http://redwood.berkeley.edu/bruno/sparsenet/).

- Source code

- This algorithm was presented in the following paper

- 54 citations on Google Scholar (last updated 22/10/2021)

- Follow-up paper

MotionClouds

MotionClouds are random dynamic stimuli optimized to study motion perception.

- Web-site

- Source code using Python.

- This algorithm was presented in the following paper

- 3746 citations on Google Scholar (last updated 04/09/2025)

- Examples of use: https://laurentperrinet.github.io/sciblog/categories/motionclouds.html

- Follow-up paper: (2018). Bayesian Modeling of Motion Perception using Dynamical Stochastic Textures. Neural Computation.

- This library was notably used in the following papers:
  - (2012). More is not always better: dissociation between perception and action explained by adaptive gain control. Nature Neuroscience.
  - (2019). Speed-Selectivity in Retinal Ganglion Cells is Sharpened by Broad Spatial Frequency, Naturalistic Stimuli. Scientific Reports.
  - (2023). Cortical recurrence supports resilience to sensory variance in the primary visual cortex. Nature Communications Biology.

PyNN

PyNN is a simulator-independent language for building neuronal network models using Python.

- Web-site

- Source code

- This algorithm was presented in the following paper

- 619 citations on Google Scholar (last updated 22/10/2021)