Next-generation neural computations

Brains are not like computers. Our brains can quickly and easily spot familiar objects, like keys in a messy room, with very little effort. In contrast, even the best computers struggle to do this as fast or efficiently. This difference shows just how much more we need to learn about how our brains work to create smarter artificial intelligence.

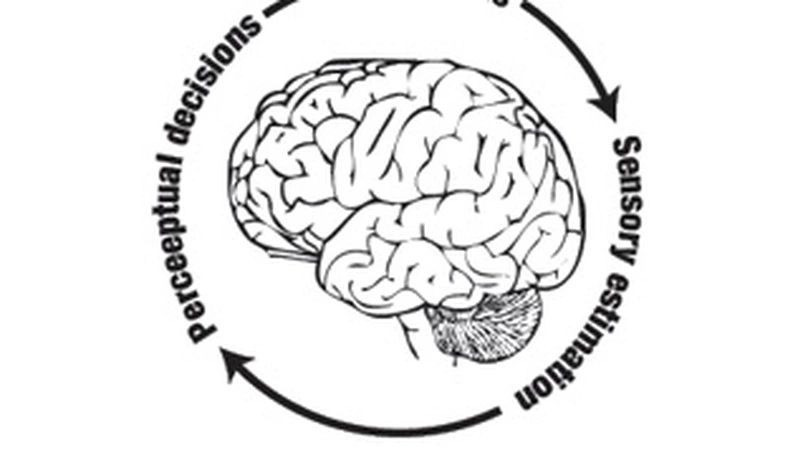

To bridge the gap between neuroscience and Artificial Intelligence (AI), I seek to harness the efficiency of vision by understanding how neural computations govern sensory processes like vision and behavioral responses like eye movements.

Biography

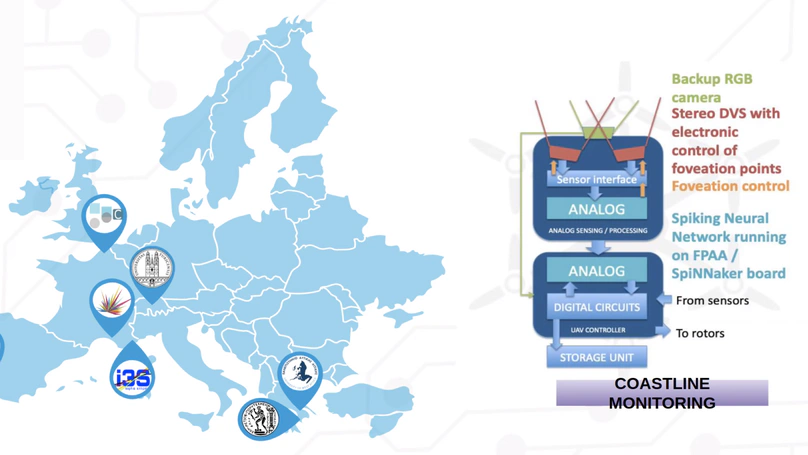

Laurent Perrinet is a computational neuroscientist (DR2 CNRS) at the Institut de Neurosciences de la Timone (UMR 7289, CNRS / Aix-Marseille Université), within the NeOpTo team. His research investigates predictive processing in the visual system — from single cortical cells to active vision and behavior — and its translation into neuromorphic algorithms. He has co-authored more than 63 peer-reviewed articles (h-index 30), supervised 6 completed PhD students and currently directs 3 PhD students (Alexandre Lainé, Matthis Dallain, Kevin Mairot). His work combines neurophysiology (Neuropixels recordings in marmoset), computational modeling (spiking neural networks, Free-Energy Principle) and open-source algorithmic development (MotionClouds, AnEMo, LogGabor).

- Predictive Processing & Active Inference

- Spiking Neural Networks & Neuromorphic Computing

- Computational Vision & Eye Movements

Habilitation à diriger des recherches, 2017

Aix-Marseille Université

PhD in Cognitive Science, 2003

Université P. Sabatier, Toulouse, France

M.S. in Engineering, 1998

SupAéro, Toulouse, France

Latest Publications

- See the accompanying code: https://github.com/laurentperrinet/MNESIS

- The code and results at the time of the presentation are accessible in this commit

- See a follow-up: (2026). Working Memory in Recurrent Spiking Neural Networks Using Heterogeneous Synaptic Delays. Seminar at CerCo.

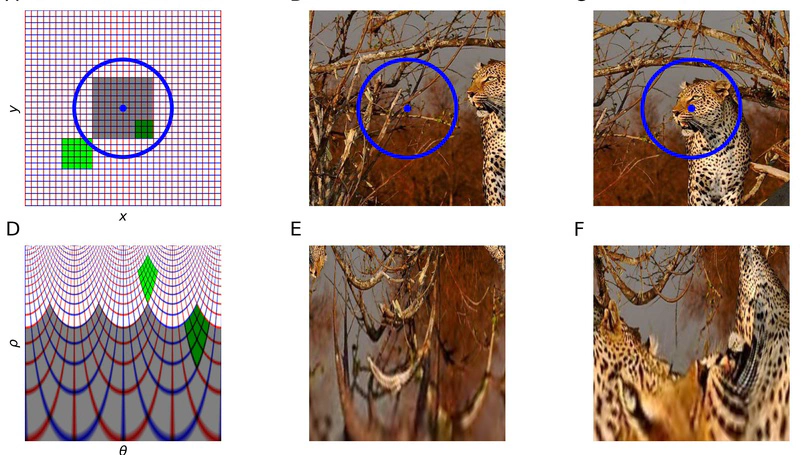

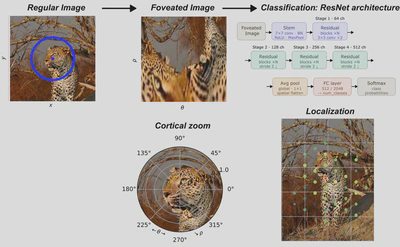

From falcons spotting prey to humans recognizing faces, the ability to rapidly process visual information depends on a foveated retinal organization that provides high-acuity central vision while preserving low-resolution peripheral vision. This organization is conserved along early visual pathways, yet remains under-explored in machine learning. Here, we examine the impact of embedding a foveated retinotopic transformation as a preprocessing layer on convolutional neural networks (CNNs) for image classification. By applying a log-polar mapping to off-the-shelf models and retraining them, we achieve comparable accuracy while improving robustness to scale and rotation. We demonstrate that this architecture is highly sensitive to shifts in the fixation point and that this sensitivity provides an effective proxy for defining saliency maps that facilitate object localization. Our results demonstrate that foveated retinotopy encodes prior geometric knowledge, providing a solution for visual searches and a meaningful trade-off between classification robustness and localization. These findings provide a proof of concept for connecting principles of biological vision with artificial networks, suggesting new, robust and efficient approaches for computer vision systems.
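As a rough illustration of the log-polar mapping mentioned in this abstract, here is a minimal NumPy sketch. It is not the code used in the paper: the function names, the grid sizes (`n_rho`, `n_theta`), the minimum radius `r_min` and the nearest-neighbour sampling are all assumptions chosen for brevity. It shows why such a map encodes geometric priors: a rotation of the image around the fixation point becomes a circular shift along the angle axis, and a zoom becomes a shift along the log-radius axis.

```python
import numpy as np

def log_polar_grid(height, width, n_rho=32, n_theta=64, r_min=1.0):
    """Sampling coordinates of a log-polar grid centred on the image (hypothetical helper)."""
    cy, cx = (height - 1) / 2.0, (width - 1) / 2.0
    r_max = min(cy, cx)
    # Radii grow geometrically: dense near the fixation point, sparse in the periphery.
    rho = np.geomspace(r_min, r_max, n_rho)
    theta = np.linspace(0.0, 2.0 * np.pi, n_theta, endpoint=False)
    rr, tt = np.meshgrid(rho, theta, indexing="ij")
    rows = cy + rr * np.sin(tt)
    cols = cx + rr * np.cos(tt)
    return rows, cols

def log_polar_transform(image, n_rho=32, n_theta=64):
    """Resample a 2D grayscale image on the log-polar grid (nearest neighbour)."""
    rows, cols = log_polar_grid(*image.shape, n_rho=n_rho, n_theta=n_theta)
    r = np.clip(np.rint(rows).astype(int), 0, image.shape[0] - 1)
    c = np.clip(np.rint(cols).astype(int), 0, image.shape[1] - 1)
    return image[r, c]  # shape (n_rho, n_theta)
```

With the default settings, a quarter-turn of the input image corresponds to a circular shift of `n_theta // 4` columns in the output, which a convolutional layer with circular padding along that axis would treat like an ordinary translation.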

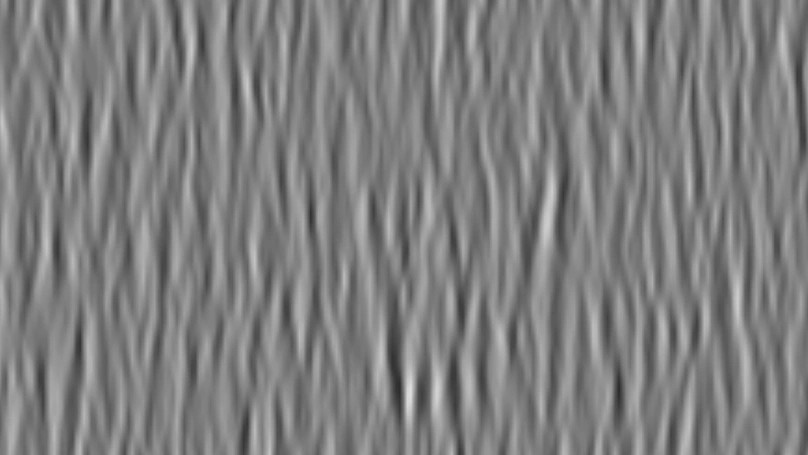

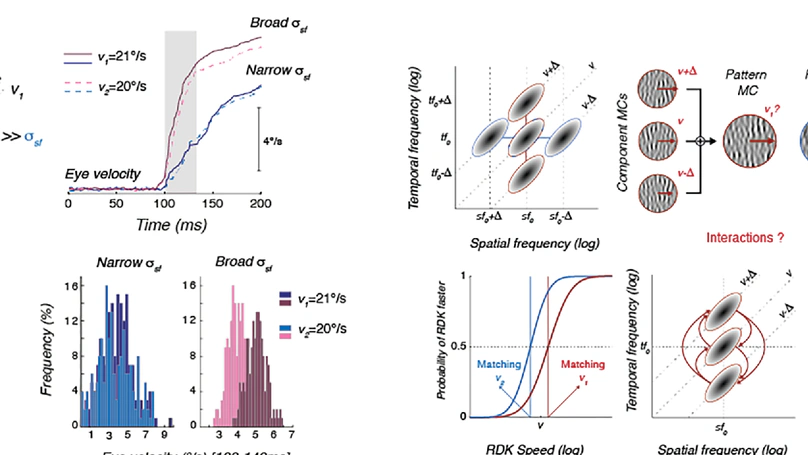

The visual systems of animals work in diverse and constantly changing environments where organism survival requires effective senses. To study the hierarchical brain networks that perform visual information processing, vision scientists require suitable tools, and Motion Clouds (MCs), a dense mixture of drifting Gabor textons, serve as a versatile solution. Here, we present an open toolbox intended for the bespoke use of MC functions and objects within modeling or experimental psychophysics contexts, including easy integration within Psychtoolbox or PsychoPy environments. The toolbox includes output visualization via a Graphical User Interface. Visualizations of parameter changes in real time give users an intuitive feel for adjustments to texture features like orientation, spatiotemporal frequencies, bandwidth, and speed. Vector calculus tools serve the frame-by-frame autoregressive generation of fully controlled stimuli, and use of the GPU allows this to be done in real time for typical stimulus array sizes. We give illustrative examples of experimental use to highlight the potential with both simple and composite stimuli. The toolbox is developed for, and by, researchers interested in psychophysics, visual neurophysiology, and mathematical and computational models. We argue the case that in all these fields, MCs can bridge the gap between well-parameterized synthetic stimuli like dots or gratings and more complex and less controlled natural videos.
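To give a feel for what such a texture is, the sketch below builds a small random-phase movie whose energy is concentrated around one spatial frequency and one speed. It uses a Fourier-domain construction, which is one common way to synthesise this kind of stimulus; it is not the toolbox's API, and it does not implement the frame-by-frame autoregressive scheme described in the abstract. The function name and all parameters (`sf_0`, `b_sf`, `v_x`, `v_y`, `b_v`) are assumptions made for illustration.

```python
import numpy as np

def motion_cloud(n_x=32, n_y=32, n_frame=16, sf_0=0.125, b_sf=0.05,
                 v_x=1.0, v_y=0.0, b_v=0.5, seed=0):
    """Random-phase texture with a Gaussian envelope in Fourier space (illustrative only)."""
    fx, fy, ft = np.meshgrid(np.fft.fftfreq(n_x), np.fft.fftfreq(n_y),
                             np.fft.fftfreq(n_frame), indexing="ij")
    f_radius = np.sqrt(fx**2 + fy**2)
    # Radial band around the preferred spatial frequency sf_0 (cycles per pixel).
    env_sf = np.exp(-0.5 * ((f_radius - sf_0) / b_sf) ** 2)
    # Energy close to the plane ft + v_x*fx + v_y*fy = 0, i.e. a rigid translation
    # at speed (v_x, v_y) pixels per frame, with bandwidth b_v.
    env_speed = np.exp(-0.5 * ((ft + v_x * fx + v_y * fy)
                               / (b_v * np.maximum(f_radius, 1e-6))) ** 2)
    envelope = env_sf * env_speed
    envelope[0, 0, :] = 0.0  # drop zero spatial frequency so the movie is zero-mean
    rng = np.random.default_rng(seed)
    phase = rng.uniform(0.0, 2.0 * np.pi, envelope.shape)
    # Keeping the real part is equivalent to symmetrising the random spectrum.
    movie = np.fft.ifftn(envelope * np.exp(1j * phase)).real
    return movie / np.abs(movie).max()  # contrast normalised to [-1, 1]
```

Calling `motion_cloud()` returns an array of shape `(32, 32, 16)`; widening `b_sf` or `b_v` makes the texture less regular in scale or in speed, which is the kind of parametric control the abstract refers to.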

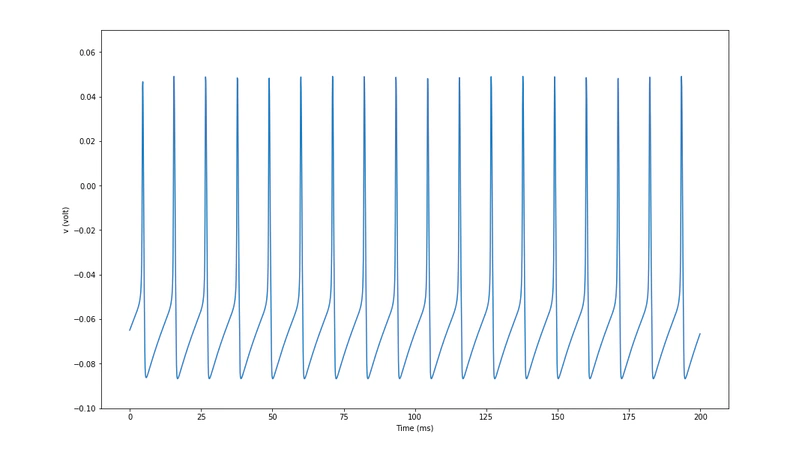

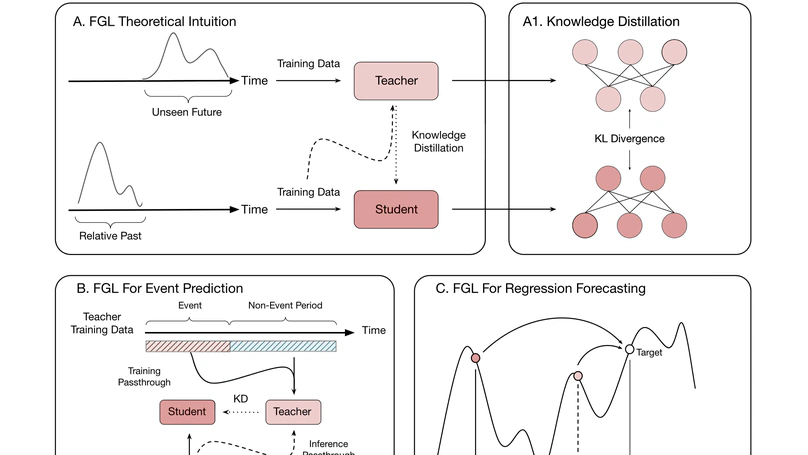

Accurate time-series forecasting is essential across a multitude of scientific and industrial domains, yet deep learning models often struggle with challenges such as capturing long-term dependencies and adapting to drift in data distributions over time. We introduce Future-Guided Learning, an approach that enhances time-series event forecasting through a dynamic feedback mechanism inspired by predictive coding. Our approach involves two models: a detection model that analyzes future data to identify critical events and a forecasting model that predicts these events based on present data. When discrepancies arise between the forecasting and detection models, the forecasting model undergoes more substantial updates, effectively minimizing surprise and adapting to shifts in the data distribution by aligning its predictions with actual future outcomes. This feedback loop, drawing upon principles of predictive coding, enables the forecasting model to dynamically adjust its parameters, improving accuracy by focusing on features that remain relevant despite changes in the underlying data. We validate our method on a variety of tasks such as seizure prediction in biomedical signal analysis and forecasting in dynamical systems, achieving a 40% increase in the area under the receiver operating characteristic curve (AUC-ROC) and a 10% reduction in mean absolute error (MAE), respectively. By incorporating a predictive feedback mechanism that adapts to data distribution drift, Future-Guided Learning offers a promising avenue for advancing time-series forecasting with deep learning.
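One way to read the feedback mechanism described above is as a distillation-style loss, in which the forecasting model is penalised both for missing the label and for diverging from the detection model's output. The sketch below is written under that assumption and is not taken from the paper's code: the function names, the mixing weight `alpha` and the use of a KL divergence are illustrative choices.

```python
import numpy as np

def softmax(z):
    """Numerically stable softmax over the last axis."""
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def future_guided_loss(forecast_logits, detect_logits, labels, alpha=0.5):
    """Cross-entropy on labels plus KL(detector || forecaster); assumed form, for illustration."""
    p_f = softmax(forecast_logits)   # forecasting model, sees present data
    p_d = softmax(detect_logits)     # detection model, sees future data
    eps = 1e-12
    ce = -np.log(p_f[np.arange(len(labels)), labels] + eps)
    kl = np.sum(p_d * (np.log(p_d + eps) - np.log(p_f + eps)), axis=-1)
    # The KL term grows with the disagreement between the two models,
    # so larger discrepancies produce a larger loss for the forecaster.
    return float(np.mean((1.0 - alpha) * ce + alpha * kl))
```

In this reading, when the two models agree the KL term vanishes and only the usual supervised term remains; when they disagree, the forecasting model receives an additional training signal.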

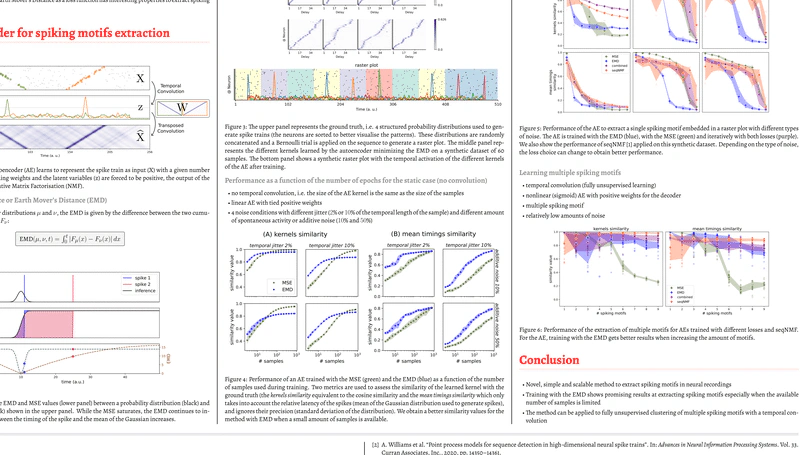

Temporal sequences are an important feature of neural information processing in biology. Neurons can fire a spike with millisecond precision, and, at the network level, repetitions of spatiotemporal spike patterns are observed in neurobiological data. However, methods for detecting precise temporal patterns in neural activity suffer from high computational complexity and poor robustness to noise, and quantitative detection of these repetitive patterns remains an open problem. Here, we propose a new method to extract spike patterns embedded in raster plots using a 1D convolutional autoencoder with the Earth Mover’s Distance (EMD) as a loss function. Importantly, the properties of the EMD make the method suitable for spike-based distributions, easy to compute, and robust to noise. Through gradient descent, the autoencoder is trained to minimize the EMD between the input and its reconstruction. We then expect the weight matrices to learn the repeating spike patterns present in the data. We validate our method on synthetically generated raster plots and compare its performance with an autoencoder trained using the Mean Squared Error (MSE) as a loss function. We show that the method using the EMD performs better at detecting the occurrence of the spike patterns, while the method using the MSE is better at capturing the underlying distributions used to generate the spikes. Finally, we propose to train the autoencoder iteratively by sequentially combining the EMD and the MSE losses. This sequential approach outperforms the widely used seqNMF method in terms of robustness to various types of noise, speed and stability. Overall, our method provides a novel approach to reliably extract repetitive temporal spike sequences, and can be readily generalized to other sequence detection applications.
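The reason the EMD suits spike timing can be seen on a toy example. The sketch below is not the paper's implementation: it uses the closed form of the EMD for two one-dimensional histograms defined on the same unit-spaced time bins, and the helper names are assumptions. Moving a spike further away in time increases the EMD, whereas the MSE gives the same value whatever the size of the shift.

```python
import numpy as np

def emd_1d(p, q):
    """EMD between two 1D histograms on the same unit-spaced bins (closed form via CDFs)."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    p, q = p / p.sum(), q / q.sum()
    # In 1D the optimal transport cost is the L1 distance between cumulative sums.
    return float(np.abs(np.cumsum(p - q)).sum())

def mse(p, q):
    """Mean squared error between two histograms."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    return float(np.mean((p - q) ** 2))

spike = [1, 0, 0, 0]
near = [0, 1, 0, 0]   # same spike, one bin later
far = [0, 0, 0, 1]    # same spike, three bins later
print(emd_1d(spike, near), emd_1d(spike, far))  # 1.0 3.0
print(mse(spike, near), mse(spike, far))        # 0.5 0.5
```

Because the EMD keeps increasing with the temporal offset, it provides a graded error signal for misaligned spikes, which is consistent with the robustness described in the abstract.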

Recent Events

We are recruiting a PhD student to work on neuromodulatory control of predictive processing in mouse vision, co-supervised by Ede Rancz (INMED) and myself.

Project Overview

This CENTURI project combines:

- Computational modeling (spiking and normative models)

- In vivo electrophysiology, imaging, behavior, and optogenetics

- Investigation of how serotonin and noradrenaline shape prediction error signaling during sensory-motor mismatch

Candidate Profile

- Motivated individual with a background in neuroscience or computational sciences

- Experience in either experimental in vivo work or modeling (Python, neural networks)

- Interest in working at the interface of theory and biology

Application Details

- 📄 Full project description

- 📬 Application form

We welcome applicants from all backgrounds and celebrate diversity and free creative thinking in our research environment.

Projects

Publications

Recent & Upcoming Talks

Grants

Contact

How to reach me

- laurent.perrinet@univ-amu.fr

- +33 619 478 120

- Institut de Neurosciences de la Timone (UMR 7289), Aix-Marseille Université - CNRS, Faculté de Médecine - Bâtiment Neurosciences, 27 Bd Jean Moulin, 13385 Marseille Cedex 05, France

- When you reach the INT building, take the stairs to the second floor, then enter the open space on the left and follow it to the end of the room; my office is on the right-hand side.

- OrcID

- mastodon

- Bluesky

- openAlex

- ResearcherID

- NeuroTree

- Google Scholar

- Zotero

- Publons

- arXiv

- GitHub

- pixelfed

- stackoverflow

- LastFM

![Saccade selection method: (a.) The input image of dimension H × W is split into H/16 × W/n sized patches and embedded into token vectors. (b.) The tokens are passed through the DINO transformer, and the attention flows from patch tokens to the [CLS] token (white arrows) are extracted and reshaped into one attention map per attention head. (c.) The multiple attention maps are fused into one by taking the maximum value across heads. (d.) The highest-attention locations define square regions (“saccades”) whose tokens are retained. (e.) Selected regions are revealed sequentially, and the image variants are classified by a pre-trained linear head.](/publication/dallain-26/saccade_selection_hu_a552a3a1b7e1914d.webp)
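Steps (c.) to (e.) of the figure above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the attention maps are taken as a plain array of shape `(n_heads, H_tokens, W_tokens)` rather than extracted from a DINO model, the function name and the `half_width` parameter are hypothetical, and suppressing already-selected regions before picking the next saccade is an added assumption that the caption does not state.

```python
import numpy as np

def select_saccades(attn, n_saccades=3, half_width=1):
    """Fuse per-head attention maps and pick square regions around the maxima."""
    fused = np.asarray(attn, dtype=float).max(axis=0)   # (c.) maximum across heads
    remaining = fused.copy()
    n_rows, n_cols = fused.shape
    mask = np.zeros(fused.shape, dtype=bool)
    centers, masks = [], []
    for _ in range(n_saccades):
        # (d.) the highest remaining attention value defines the next saccade.
        i, j = np.unravel_index(np.argmax(remaining), remaining.shape)
        r0, r1 = max(i - half_width, 0), min(i + half_width + 1, n_rows)
        c0, c1 = max(j - half_width, 0), min(j + half_width + 1, n_cols)
        mask[r0:r1, c0:c1] = True
        # Assumption: do not revisit a region that has already been revealed.
        remaining[r0:r1, c0:c1] = -np.inf
        centers.append((int(i), int(j)))
        masks.append(mask.copy())   # (e.) cumulative mask after each saccade
    return centers, masks
```

Each entry of `masks` marks the tokens visible after the corresponding saccade, so the successive image variants can be passed to a classifier one at a time.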